Plateau Analysis: How to Distinguish a Robust Optimum from Overfitting

Article 6 in the "Backtests Without Illusions" series

You ran study.optimize(), Optuna found a parameter set with PnL +87%. You're excited and preparing the strategy for production. Two weeks of live trading later, PnL is around zero. What happened?

The optimizer found the tip of a needle in parameter space. The parameters are perfectly fitted to the historical sequence of trades — but the slightest deviation in market conditions destroys the entire construct. This is classic overfitting, and it could have been detected before launch.

In the previous article we compared coordinate descent with Bayesian optimization and showed why Optuna finds the optimum more efficiently. Today — the next step: how to make sure the found optimum is robust, rather than the result of fitting to noise.

Why Finding the "Best" Parameters Is Only Half the Work

An optimizer navigating a vast multidimensional parameter landscape in search of the true optimum


Strategy parameter optimization is a search for a maximum in a multidimensional space. The problem is that maxima come in two types:

- Plateau: a wide flat region where PnL is consistently high across parameter variations. Even if market conditions shift the effective parameters by 10-20%, the strategy will continue to profit.

- Sharp peak: a narrow summit where PnL is high only at the exact parameter value. A shift of one step collapses profitability. This is almost certainly overfitting: the optimizer found an artifact of historical data, not a stable pattern.

A mountaineering metaphor: a plateau is a mountain tableland where you can walk safely. A sharp peak is the tip of a needle where you can only balance.

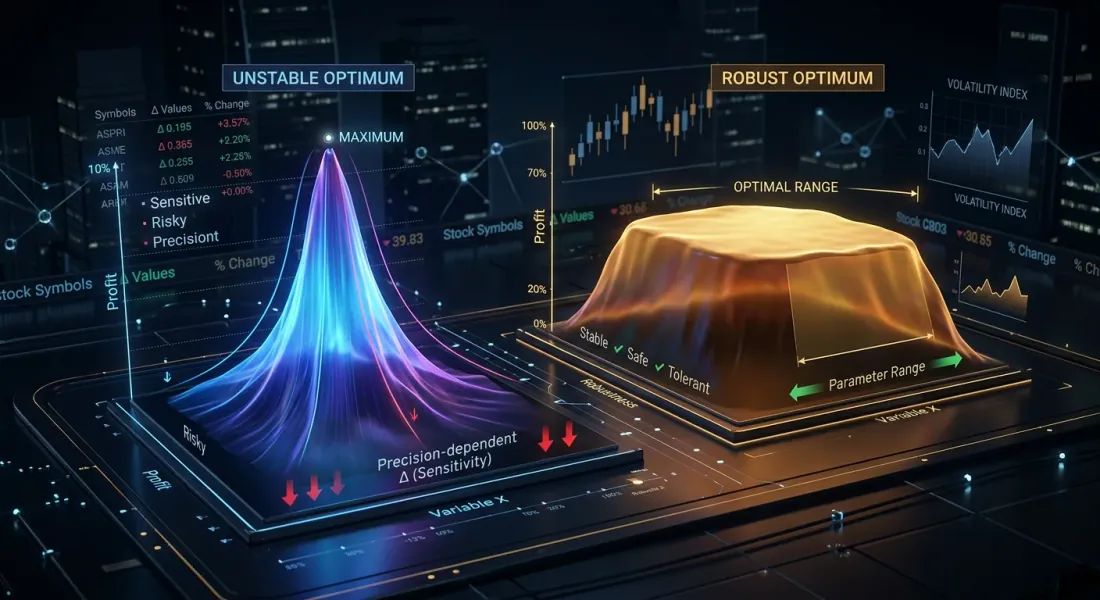

Sharp Peak vs Flat Plateau — Visual Intuition

Left: a robust plateau (wide table mountain with gentle slopes). Right: a fragile sharp peak (needle tip surrounded by deep valleys)


Imagine a contour map where the axes are two strategy parameters and the color represents PnL. Two patterns are easy to distinguish visually:

Plateau (robust optimum):

- Wide areas of the same color

- Smooth transitions between PnL levels

- Isolines far apart

- Shifting from the optimum by +/-20% changes PnL by no more than 10%

Imagine a heatmap: in the center — a bright yellow rectangle roughly one-third the size of the entire map. The color gradually transitions to orange, then red toward the edges. The optimum is not a point, but a region.

Sharp peak (overfitting):

- A narrow bright spot surrounded by cold colors

- Abrupt transitions: a collapse right next to the optimum

- Isolines compressed into tight rings

- Shifting by +/-5% drops PnL by 50% or more

Imagine the same heatmap, but in the center — a tiny yellow dot immediately surrounded by blue and purple. A single "correct" parameter combination.
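To make the contrast concrete, here is a minimal sketch that uses two synthetic one-dimensional PnL curves. Both curves, the optimum of 0.020, and the widths are invented for illustration and do not come from any real strategy: a wide Gaussian bump stands in for a plateau and a narrow one for a needle. The sketch measures how much PnL is lost when the parameter shifts by a given fraction of its optimal value.

```python
import math

def gaussian_pnl(p, p_opt, width, peak=100.0):
    """Synthetic PnL curve: a Gaussian bump centred on p_opt (illustrative only)."""
    return peak * math.exp(-((p - p_opt) ** 2) / (2 * width ** 2))

def relative_drop(pnl_fn, p_opt, shift_frac):
    """Worst relative PnL drop when the parameter moves by +/- shift_frac of p_opt."""
    best = pnl_fn(p_opt)
    shifted = min(pnl_fn(p_opt * (1 + shift_frac)),
                  pnl_fn(p_opt * (1 - shift_frac)))
    return (best - shifted) / best

P_OPT = 0.020  # hypothetical optimal parameter value

def plateau(p):
    return gaussian_pnl(p, P_OPT, width=0.010)   # wide bump

def needle(p):
    return gaussian_pnl(p, P_OPT, width=0.0008)  # narrow bump

plateau_drop = relative_drop(plateau, P_OPT, 0.20)  # +/-20% shift
needle_drop = relative_drop(needle, P_OPT, 0.05)    # +/-5% shift
print(f"plateau, 20% shift: {plateau_drop:.1%} drop")
print(f"needle,   5% shift: {needle_drop:.1%} drop")
```

With these assumed widths the plateau loses less than 10% of its PnL on a 20% shift, while the needle loses more than half on a 5% shift, which matches the two patterns described above.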

Parameter Sensitivity Analysis

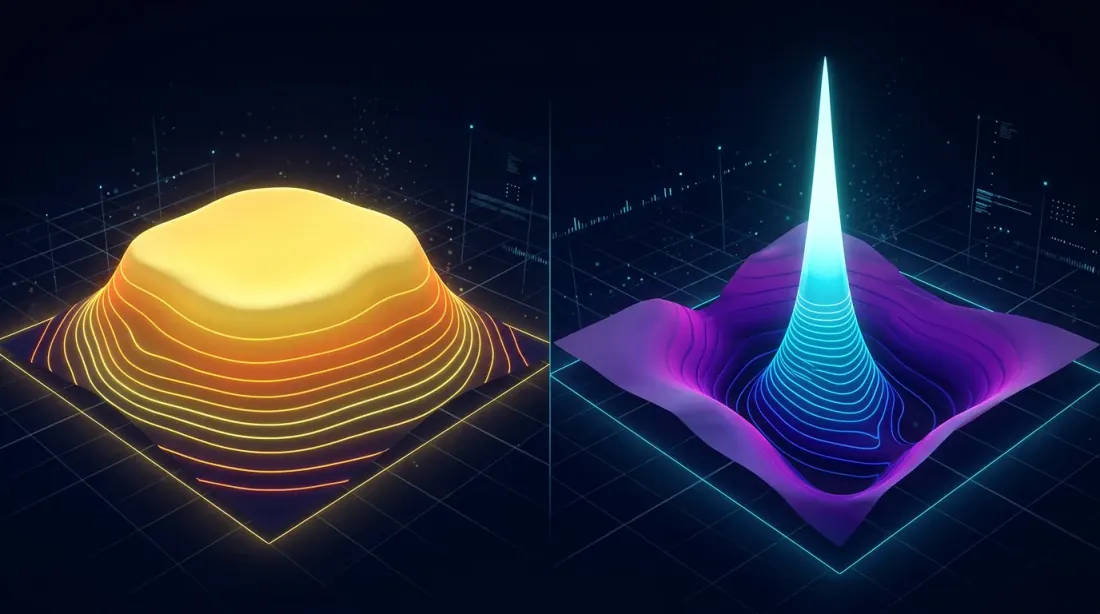

Slice plots showing how PnL depends on individual parameter values — wide bands indicate robustness, narrow clusters indicate fragility


One-Dimensional Analysis: PnL vs One Parameter

The simplest approach — fix all parameters except one and see how PnL depends on its value. Optuna provides plot_slice for this:

import optuna

from optuna.visualization import plot_slice

study = optuna.create_study(direction="maximize")

study.optimize(objective, n_trials=500)

fig = plot_slice(study, params=["htf_entry_sell", "ltf_momentum", "stop_loss_pct"])

fig.show()

What to look for on a slice plot:

- Robust parameter: the point cloud forms a wide horizontal band near the optimum. The best trials are spread across a wide range of parameter values.

- Fragile parameter: the best trials are concentrated in a narrow range. Shift the parameter by one or two steps and profitability collapses.

Two-Dimensional Analysis: Contour Plots (Heatmaps)

A contour plot shows the interaction of two parameters simultaneously. This is the key tool for plateau analysis, because parameters rarely act independently — entry and exit thresholds, timeframes, and position sizes are interconnected.

from optuna.visualization import plot_contour

fig = plot_contour(study, params=["htf_entry_sell", "htf_exit_buy"])

fig.show()

A contour plot for a robust parameter pair looks like a topographic map of a hilly plain: smooth wide isolines, large areas of the same color. A contour plot for a fragile pair — like a map of a volcanic cone: tight concentric rings around a single point.

For a strategy with 12 separation parameters, this gives $\binom{12}{2} = 66$ pairwise contour plots. You don't have to study them all; start with the parameters that Optuna rated as most important.
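A short sketch of how the list of pairs could be narrowed down. The importance values below are hypothetical placeholders; in practice they would come from `optuna.importance.get_param_importances(study)`. The choice of the top four parameters is also an assumption made only for this example.

```python
from itertools import combinations
from math import comb

n_params = 12
n_pairs = comb(n_params, 2)  # number of distinct parameter pairs
print(n_pairs)

# Hypothetical importance values (placeholders, not real results)
importances = {
    "htf_entry_sell": 0.31,
    "htf_entry_buy": 0.25,
    "ltf_momentum_threshold": 0.19,
    "stop_loss_pct": 0.10,
    "take_profit_pct": 0.07,
}

# Keep only the pairs formed by the four most important parameters
top = sorted(importances, key=importances.get, reverse=True)[:4]
pairs_to_plot = list(combinations(top, 2))
for pair in pairs_to_plot:
    print(pair)
```

Restricting the plots to the top four parameters leaves six contour plots instead of 66, which is a manageable number to inspect by eye.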

Multidimensional Analysis: Parameter Importance Ranking

Optuna can estimate each parameter's contribution to the objective function:

from optuna.visualization import plot_param_importances

fig = plot_param_importances(study)

fig.show()

The parameter importance chart is a horizontal bar chart. Parameters are ranked by their contribution to PnL variance in descending order. The top 3-4 parameters usually explain 70-80% of the variance.

Rule: if a parameter explains less than 2% of PnL variance, its value is practically irrelevant to the result — it's robust by definition. Focus plateau analysis on the top-5 most important parameters.
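The rule can be applied mechanically once the importances are available. The sketch below works on a plain dictionary; all names and values in it are hypothetical, and the helper `split_by_importance` is not part of Optuna.

```python
def split_by_importance(importances, min_share=0.02, top_k=5):
    """Split parameters into a focus list (top_k with share >= min_share)
    and a negligible list (share < min_share)."""
    total = sum(importances.values())
    shares = {name: value / total for name, value in importances.items()}
    ranked = sorted(shares, key=shares.get, reverse=True)
    focus = [name for name in ranked if shares[name] >= min_share][:top_k]
    negligible = [name for name in ranked if shares[name] < min_share]
    return focus, negligible

# Hypothetical importances (placeholders, not real results)
importances = {
    "htf_entry_sell": 0.31,
    "htf_entry_buy": 0.25,
    "ltf_momentum_threshold": 0.19,
    "stop_loss_pct": 0.10,
    "take_profit_pct": 0.07,
    "trailing_stop_pct": 0.05,
    "cooldown_bars": 0.018,
    "volume_filter": 0.012,
}

focus, negligible = split_by_importance(importances)
print("Analyze in detail:", focus)
print("Practically irrelevant:", negligible)
```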

Optuna Visualization Tools

Contour heatmaps showing parameter interaction landscape alongside importance rankings


plot_slice — One-Dimensional Slices

import optuna
from optuna.visualization import plot_slice

fig = plot_slice(study, params=[
    "htf_entry_sell", "htf_entry_buy",
    "ltf_momentum_threshold", "stop_loss_pct",
    "take_profit_pct", "trailing_stop_pct",
])
fig.update_layout(height=800, title="Parameter Slice Plots")
fig.show()

The result — a grid of scatter plots. Each subplot shows the objective function value (PnL, Y-axis) against a single parameter value (X-axis). Points are individual trials. For a robust parameter, the best points (highest PnL) are distributed across a wide range of X. For a fragile one — grouped in a narrow column.

plot_contour — Two-Dimensional Contours

from optuna.visualization import plot_contour

important_pairs = [
    ["htf_entry_sell", "htf_entry_buy"],
    ["htf_entry_sell", "stop_loss_pct"],
    ["ltf_momentum_threshold", "take_profit_pct"],
]

# One contour plot per parameter pair
for params in important_pairs:
    fig = plot_contour(study, params=params)
    fig.update_layout(title=f"Contour: {params[0]} vs {params[1]}")
    fig.show()

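Each contour plot is a heatmap with two parameters on the axes. Color encodes PnL interpolated from the trials in that region of parameter space. On a viridis-style scale, yellow/green means high PnL and blue/purple means low; Optuna's default colorscale may differ, so read the colorbar. Isolines connect points with the same PnL.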

plot_param_importances — Parameter Contributions

import optuna
from optuna.visualization import plot_param_importances

fig = plot_param_importances(
    study,
    evaluator=optuna.importance.FanovaImportanceEvaluator(),
)
fig.show()

fANOVA (functional ANOVA) decomposes the variance of the objective function across parameters and their interactions. This is more powerful than simple correlation because it accounts for nonlinear effects.
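Why correlation is not enough can be shown with a toy case. The sketch below is not fANOVA: it uses a crude bin-wise estimate of explained variance as a stand-in for a main effect, and the PnL curve is synthetic. It only illustrates that a parameter with a peak in the middle of its range can have near-zero linear correlation with PnL while still explaining almost all of its variance.

```python
import random

def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def explained_variance_by_bins(xs, ys, n_bins=10):
    """Share of Var(y) explained by the bin-wise mean of y over x in [0, 1)."""
    n = len(xs)
    my = sum(ys) / n
    total = sum((y - my) ** 2 for y in ys)
    bins = {}
    for x, y in zip(xs, ys):
        bins.setdefault(min(int(x * n_bins), n_bins - 1), []).append(y)
    between = sum(len(b) * (sum(b) / len(b) - my) ** 2 for b in bins.values())
    return between / total

rng = random.Random(0)
xs = [rng.random() for _ in range(2000)]   # parameter values in [0, 1)
ys = [-(x - 0.5) ** 2 for x in xs]         # synthetic PnL, peaks mid-range

r = pearson(xs, ys)
share = explained_variance_by_bins(xs, ys)
print(f"Pearson correlation: {r:+.3f}")
print(f"Variance explained by binned means: {share:.1%}")
```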

Quantitative Plateau Metrics

Sensitivity ratio, plateau width, and robustness score — three metrics that formalize plateau quality


Visual assessment is subjective. We need numbers. Here are three metrics that formalize the concept of a "plateau."

Sensitivity Ratio

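The ratio of the relative PnL change to the relative parameter change:

$$S = \frac{|\Delta \text{PnL}| / \text{PnL}^*}{|\Delta p| / p^*}$$

where $\Delta \text{PnL}$ is the PnL drop when parameter $p$ deviates from the optimum $p^*$ by $\Delta p$, and $\text{PnL}^*$ is the PnL at the optimum.

Interpretation:

- $S < 0.5$: the parameter is robust; a 10% shift causes less than a 5% PnL drop

- $0.5 \le S \le 2$: moderate sensitivity

- $S > 2$: the parameter is fragile; a 10% shift crashes PnL by 20%+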
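As a worked example, the ratio can be computed directly from two backtest results: the PnL at the optimum and the PnL at a shifted parameter value. The sketch below uses the Strategy A figures quoted later in this article (PnL of +55% at 0.020 and +49% at 0.025). The function names and the 0.5 and 2 cutoffs follow the interpretation above and are otherwise assumptions of this example.

```python
def sensitivity_ratio(pnl_opt, pnl_shifted, p_opt, p_shifted):
    """Relative PnL drop divided by relative parameter shift."""
    rel_drop = (pnl_opt - pnl_shifted) / abs(pnl_opt)
    rel_shift = abs(p_shifted - p_opt) / abs(p_opt)
    return rel_drop / rel_shift

def classify(s):
    """Map a sensitivity ratio to the three bands used in this article."""
    if s < 0.5:
        return "robust"
    if s <= 2.0:
        return "moderate"
    return "fragile"

# Strategy A: a 25% parameter shift costs about 11% of PnL
s_a = sensitivity_ratio(pnl_opt=55.0, pnl_shifted=49.0,
                        p_opt=0.020, p_shifted=0.025)
print(f"S = {s_a:.2f} -> {classify(s_a)}")
```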

Plateau Width

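The width of the parameter region within which PnL stays within $\delta$ of the optimum:

$$W_\delta = p_{\max} - p_{\min}, \quad \text{where } [p_{\min}, p_{\max}] = \{\, p : \text{PnL}(p) \ge (1 - \delta)\,\text{PnL}^* \,\}$$

Relative plateau width:

$$W_{\text{rel}} = \frac{W_\delta}{p_{\text{high}} - p_{\text{low}}}$$

where the denominator is the full search range of the parameter.

Interpretation:

- $W_{\text{rel}} > 0.3$: the plateau covers more than 30% of the range at the 10% threshold. Robust parameter.

- $W_{\text{rel}} < 0.05$: the plateau is narrower than 5% of the range. Red flag.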
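A minimal sketch of the width calculation on a one-dimensional slice. It assumes PnL has been evaluated on a grid of values for one parameter with the others held fixed, and that the best PnL is positive. The curve below is synthetic. The scan walks outward from the best point and stops at the first value below the cutoff, so an isolated good point far from the optimum is not counted as part of the plateau.

```python
def plateau_width(curve, threshold_pct=10.0):
    """Width of the contiguous region around the best point where PnL stays
    within threshold_pct of the best PnL.

    curve: list of (param_value, pnl) pairs along one parameter.
    """
    pts = sorted(curve)
    best_idx = max(range(len(pts)), key=lambda i: pts[i][1])
    cutoff = pts[best_idx][1] * (1 - threshold_pct / 100)
    lo = hi = best_idx
    # Extend left and right while neighbours stay above the cutoff
    while lo > 0 and pts[lo - 1][1] >= cutoff:
        lo -= 1
    while hi < len(pts) - 1 and pts[hi + 1][1] >= cutoff:
        hi += 1
    return pts[hi][0] - pts[lo][0]

# Synthetic slice (illustrative): grid 0.005..0.045 in steps of 0.001
grid = [round(0.005 + i * 0.001, 3) for i in range(41)]
curve = [(p, 55.0 - 30000.0 * (p - 0.020) ** 2) for p in grid]

width = plateau_width(curve, threshold_pct=10.0)
rel_width = width / (grid[-1] - grid[0])
print(f"W = {width:.3f}, W_rel = {rel_width:.0%}")
```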

Robustness Score

A combined metric across all parameters:

$$R = \prod_{i} W_{\text{rel},i}^{\,w_i}$$

where $w_i$ is the normalized importance of parameter $i$ from fANOVA ($\sum_i w_i = 1$).

The product of weighted widths is a strict metric: if even one important parameter has a narrow plateau, $R$ will be low. Unimportant parameters (with small $w_i$) have almost no effect.

Interpretation:

- $R > 0.1$: the strategy is robust

- $0.01 < R \le 0.1$: additional validation required (walk-forward)

- $R \le 0.01$: overfitting is very likely
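The effect of the weights is easiest to see on a small example. The sketch below computes the score as a weighted geometric mean of relative plateau widths, in log space for numerical stability. The three parameters, their weights, and their widths are hypothetical.

```python
import math

def robustness_score(widths, weights):
    """Weighted geometric mean of relative plateau widths.

    widths, weights: dicts keyed by parameter name.
    Weights are normalized here so that they sum to 1.
    """
    total = sum(weights.values())
    log_score = sum(
        (weights[name] / total) * math.log(max(widths[name], 1e-10))
        for name in widths
    )
    return math.exp(log_score)

weights = {"a": 0.6, "b": 0.3, "c": 0.1}            # hypothetical importances

all_wide = {"a": 0.40, "b": 0.35, "c": 0.50}
important_narrow = {"a": 0.02, "b": 0.35, "c": 0.50}    # key parameter fragile
unimportant_narrow = {"a": 0.40, "b": 0.35, "c": 0.02}  # minor parameter fragile

r_wide = robustness_score(all_wide, weights)
r_important = robustness_score(important_narrow, weights)
r_unimportant = robustness_score(unimportant_narrow, weights)
print(f"all wide:           {r_wide:.3f}")
print(f"important narrow:   {r_important:.3f}")
print(f"unimportant narrow: {r_unimportant:.3f}")
```

A narrow plateau on the most important parameter pushes the score below 0.1, while the same narrow plateau on the least important parameter leaves it comfortably above.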

Python Code for Automated Plateau Detection

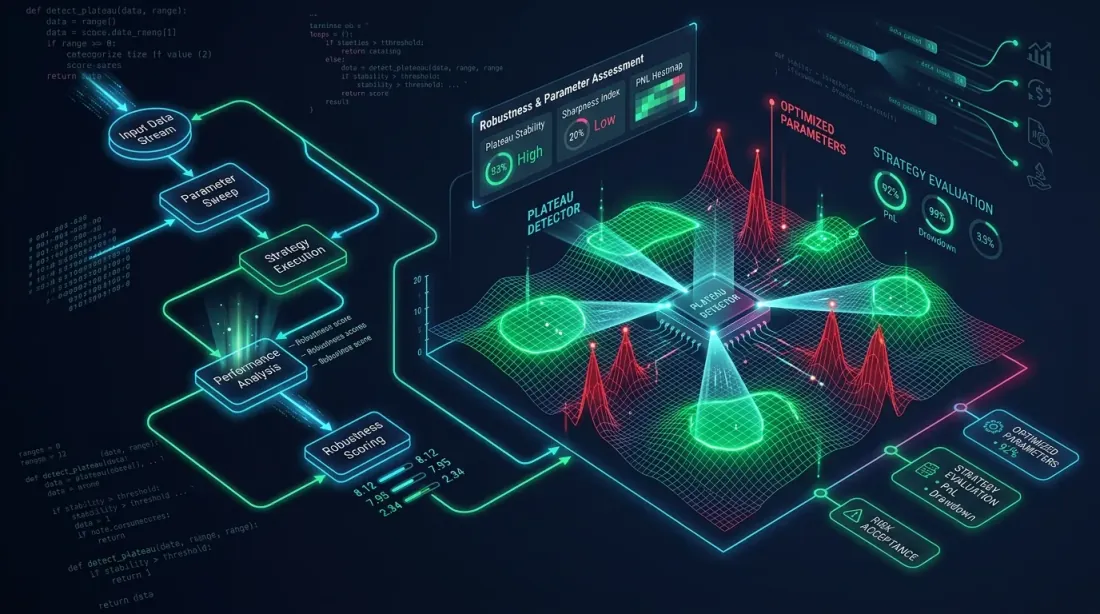

Automated system scanning the parameter landscape to identify robust plateaus and fragile peaks


import numpy as np

import optuna

from optuna.importance import FanovaImportanceEvaluator

from typing import Dict, List, Tuple

def compute_sensitivity_ratio(
    study: optuna.Study,
    param_name: str,
) -> float:
    """
    Estimate the sensitivity ratio for a single numeric parameter.

    Fits a quadratic PnL(param) curve to the top 20% of completed trials
    (at least 10) and returns the elasticity |dPnL/dparam * param / PnL|
    evaluated at the best trial. Other parameters are not held fixed,
    so this is a rough estimate.
    """
    best_trial = study.best_trial
    best_value = best_trial.values[0]
    best_param = best_trial.params[param_name]

    all_trials = [t for t in study.trials if t.state == optuna.trial.TrialState.COMPLETE]
    all_trials.sort(key=lambda t: t.values[0], reverse=True)
    top_trials = all_trials[:max(10, len(all_trials) // 5)]

    param_values = np.array([t.params[param_name] for t in top_trials])
    pnl_values = np.array([t.values[0] for t in top_trials])

    # The elasticity is undefined when the optimum or its PnL is zero
    if best_param == 0 or best_value == 0:
        return float('inf')

    # A quadratic fit needs at least three distinct parameter values
    if len(np.unique(param_values)) < 3:
        return float('nan')

    # Quadratic fit: PnL ~ a * p^2 + b * p + c (np.polyfit returns [a, b, c])
    coeffs = np.polyfit(param_values, pnl_values, deg=2)
    dpnl_dparam = 2 * coeffs[0] * best_param + coeffs[1]
    sensitivity = abs(dpnl_dparam * best_param / best_value)
    return sensitivity

def compute_plateau_width(
    study: optuna.Study,
    param_name: str,
    threshold_pct: float = 10.0,
) -> Tuple[float, float]:
    """
    Compute absolute and relative plateau width.

    The plateau is taken as the span of param_name over all completed
    trials whose PnL is within threshold_pct of the best PnL.

    Returns:
        (absolute_width, relative_width)
    """
    best_value = study.best_value
    # Subtract a share of |best_value| so the cutoff stays below the best
    # value even when the best PnL is negative
    threshold = best_value - abs(best_value) * threshold_pct / 100

    trials = [t for t in study.trials if t.state == optuna.trial.TrialState.COMPLETE]
    good_trials = [t for t in trials if t.values[0] >= threshold]
    if not good_trials:
        return 0.0, 0.0

    good_params = [t.params[param_name] for t in good_trials]
    all_params = [t.params[param_name] for t in trials]

    plateau_min = min(good_params)
    plateau_max = max(good_params)
    absolute_width = plateau_max - plateau_min

    # The search range is approximated by the span of sampled values
    search_range = max(all_params) - min(all_params)
    relative_width = absolute_width / search_range if search_range > 0 else 0.0
    return absolute_width, relative_width

def compute_robustness_score(
    study: optuna.Study,
    threshold_pct: float = 10.0,
) -> Dict:
    """
    Compute combined robustness score.

    Returns:
        dict with per-parameter metrics and the final score
    """
    evaluator = FanovaImportanceEvaluator()
    importances = optuna.importance.get_param_importances(
        study, evaluator=evaluator
    )

    results = {}
    total_importance = sum(importances.values())

    for param_name, importance in importances.items():
        sensitivity = compute_sensitivity_ratio(study, param_name)
        abs_width, rel_width = compute_plateau_width(
            study, param_name, threshold_pct
        )
        weight = importance / total_importance
        results[param_name] = {
            "importance": importance,
            "weight": weight,
            "sensitivity_ratio": sensitivity,
            "plateau_width_abs": abs_width,
            "plateau_width_rel": rel_width,
        }

    # Weighted geometric mean of relative widths, computed in log space;
    # widths are floored at 1e-10 to avoid log(0)
    log_score = sum(
        r["weight"] * np.log(max(r["plateau_width_rel"], 1e-10))
        for r in results.values()
    )
    robustness_score = np.exp(log_score)

    return {
        "robustness_score": robustness_score,
        "parameters": results,
        "verdict": (
            "robust" if robustness_score > 0.1
            else "check" if robustness_score > 0.01
            else "overfitting"
        ),
    }

Usage

report = compute_robustness_score(study, threshold_pct=10.0)

print(f"Robustness score: {report['robustness_score']:.4f}")
print(f"Verdict: {report['verdict']}")
print()

for name, metrics in report["parameters"].items():
    print(f"  {name}:")
    print(f"    Importance: {metrics['importance']:.3f}")
    print(f"    Sensitivity: {metrics['sensitivity_ratio']:.2f}")
    print(f"    Plateau width: {metrics['plateau_width_rel']:.1%}")
    print()

Example output:

Robustness score: 0.1482
Verdict: robust

  htf_entry_sell:
    Importance: 0.312
    Sensitivity: 0.38
    Plateau width: 42.5%

  htf_entry_buy:
    Importance: 0.251
    Sensitivity: 0.45
    Plateau width: 38.1%

  ltf_momentum_threshold:
    Importance: 0.187
    Sensitivity: 1.21
    Plateau width: 22.3%

  stop_loss_pct:
    Importance: 0.098
    Sensitivity: 0.67
    Plateau width: 31.0%

  take_profit_pct:
    Importance: 0.072
    Sensitivity: 0.89
    Plateau width: 28.4%

  trailing_delta:
    Importance: 0.031
    Sensitivity: 0.22
    Plateau width: 55.2%

Practical Examples with Separation Strategies

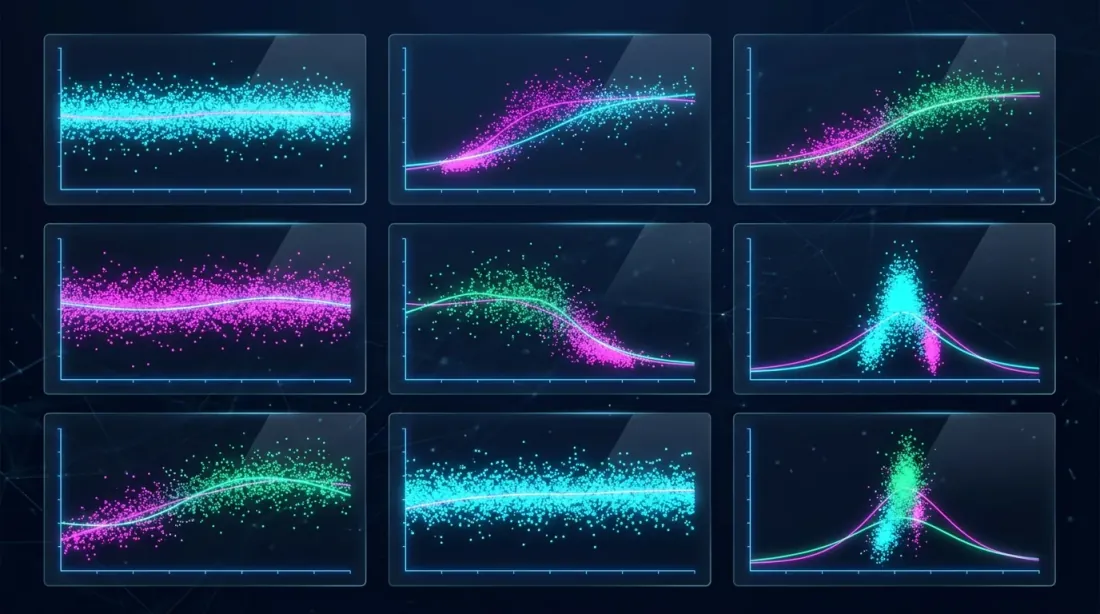

Comparing Strategy A (wide plateau, robust), Strategy B (moderate), and Strategy C (sharp peak, overfitted)


Let's examine three strategies with 12 separation parameters. Each strategy underwent Optuna optimization with 500 trials.

Strategy A (~55% PnL, ~500 trades, ~15% time)

Strategy A's parameters form a wide plateau. Take the key parameter htf_entry_sell:

- Optimal value: 0.020

- PnL at 0.015: +51% (7% drop)

- PnL at 0.025: +49% (11% drop)

- PnL at 0.010: +43% (22% drop)

- PnL at 0.030: +41% (25% drop)

If you imagine this as a one-dimensional plot (X-axis — htf_entry_sell value, Y-axis — PnL), you'll see a gentle parabola with a flat top. The range 0.010-0.030 is the plateau, where PnL stays within +/-25% of the optimum.

Sensitivity ratio: $S \approx 0.44$ (robust).

Plateau width at 10% threshold: from 0.013 to 0.027, $W_{\text{rel}} = 35\%$.

Strategy B (~25% PnL, ~40 trades, ~5% time)

Strategy B is optimized on a small number of trades. Parameter htf_entry_sell:

- Optimal value: 0.018

- PnL at 0.015: +24% (4% drop)

- PnL at 0.025: +9% (64% drop)

- PnL at 0.012: +11% (56% drop)

On the plot — an asymmetric and steep curve. The plateau exists only in the narrow range 0.015-0.020. To the right of the optimum — a cliff.

Sensitivity ratio: $S \approx 1.64$, which is moderate sensitivity, but with 40 trades this is a red flag. Small sample + narrow plateau = high probability of overfitting.

Plateau width at 10% threshold: from 0.016 to 0.020, $W_{\text{rel}} = 10\%$.

Strategy C (~300% PnL, ~400 trades, ~45% time)

Strategy C shows stunning PnL, but plateau analysis reveals problems:

- Optimal value of htf_entry_sell: 0.022

- PnL at 0.020: +295% (2% drop)

- PnL at 0.025: +142% (53% drop)

- PnL at 0.019: +128% (57% drop)

On the plot — a characteristic "needle": a very high peak at 0.022, sharp drop in all directions. The contour plot would show a bright spot immediately surrounded by cold colors.

Sensitivity ratio: $S \approx 3.79$ (fragile). Despite 400 trades, the strategy is excessively dependent on the exact value of a single parameter.

Plateau width at 10% threshold: from 0.021 to 0.023, $W_{\text{rel}} = 5\%$.

Summary Table

| Strategy | PnL | Trades | Sensitivity | Plateau width | Robustness score | Verdict |
|---|---|---|---|---|---|---|
| Strategy A | +55% | ~500 | 0.44 | 35% | 0.148 | Robust |
| Strategy B | +25% | ~40 | 1.64 | 10% | 0.032 | Check (small sample) |
| Strategy C | +300% | ~400 | 3.79 | 5% | 0.008 | Overfitting |

Paradox: Strategy C with PnL +300% has the worst robustness score. Strategy A with a "modest" +55% is the most robust. This is a typical plateau analysis result: impressive numbers often mask fragility.

Confidence intervals for each strategy can additionally be verified through Monte Carlo bootstrap — it will show PnL spread when resampling trades.
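A minimal sketch of such a bootstrap, assuming a list of per-trade returns is available. The trades below are randomly generated placeholders, not real results. The sketch resamples trades independently with replacement, which ignores any serial dependence between trades; a block bootstrap would be one way to address that.

```python
import random

def bootstrap_pnl_ci(trade_returns, n_resamples=2000, alpha=0.05, seed=42):
    """Percentile bootstrap interval for total PnL.

    Each resample draws len(trade_returns) trades with replacement
    and sums them; the interval is read off the sorted totals.
    """
    rng = random.Random(seed)
    n = len(trade_returns)
    totals = sorted(
        sum(rng.choices(trade_returns, k=n)) for _ in range(n_resamples)
    )
    lo = totals[int(alpha / 2 * n_resamples)]
    hi = totals[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi

# Hypothetical per-trade returns in percent (placeholders)
gen = random.Random(0)
trades = [gen.gauss(0.1, 1.0) for _ in range(500)]

lo, hi = bootstrap_pnl_ci(trades)
total = sum(trades)
print(f"Total PnL: {total:.1f}%")
print(f"95% bootstrap interval: [{lo:.1f}%, {hi:.1f}%]")
```

With few trades the interval becomes very wide, which is exactly the problem with small samples.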

3D Visualization and Heatmaps

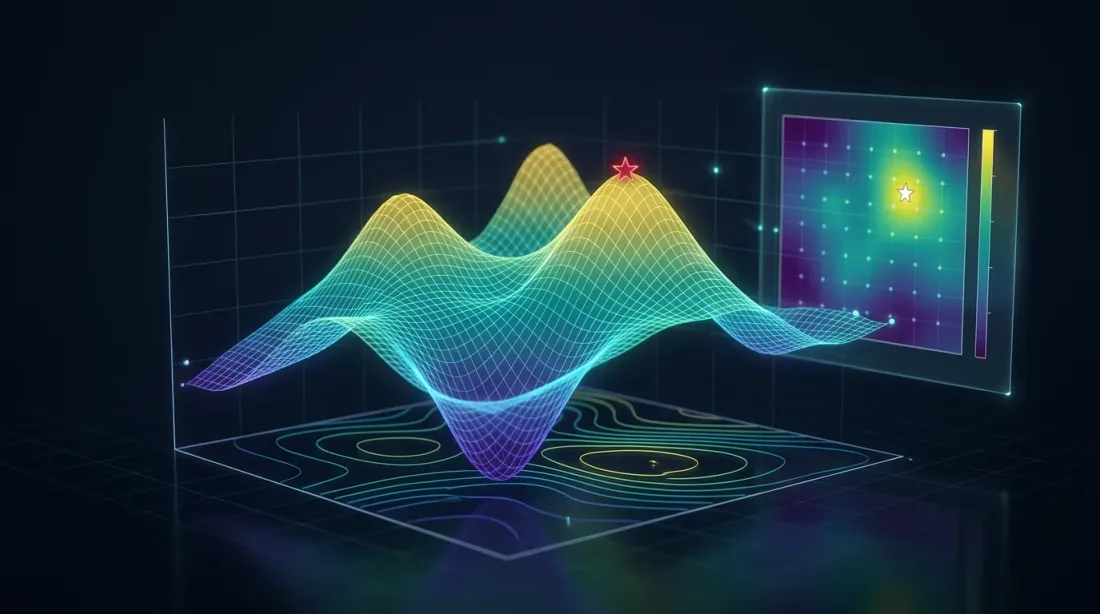

3D surface plot of PnL over two parameters with contour lines projected onto the floor plane


For the most important parameter pairs, it's useful to build a 3D surface and heatmap. This provides intuitive understanding of the landscape shape.

import numpy as np
import matplotlib.pyplot as plt
import optuna
from matplotlib import cm
from mpl_toolkits.mplot3d import Axes3D  # registers the 3D projection on older matplotlib
from scipy.interpolate import griddata

def plot_parameter_landscape(
    study: "optuna.Study",
    param_x: str,
    param_y: str,
    grid_size: int = 50,
):
    """
    Build a 3D surface plot and heatmap for a pair of parameters.
    """
    trials = [t for t in study.trials
              if t.state == optuna.trial.TrialState.COMPLETE]

    x_vals = np.array([t.params[param_x] for t in trials])
    y_vals = np.array([t.params[param_y] for t in trials])
    z_vals = np.array([t.values[0] for t in trials])

    # Interpolate scattered trials onto a regular grid;
    # cells outside the convex hull of the trials become NaN
    xi = np.linspace(x_vals.min(), x_vals.max(), grid_size)
    yi = np.linspace(y_vals.min(), y_vals.max(), grid_size)
    Xi, Yi = np.meshgrid(xi, yi)
    Zi = griddata((x_vals, y_vals), z_vals, (Xi, Yi), method='cubic')

    fig = plt.figure(figsize=(18, 7))

    # Left panel: 3D surface
    ax1 = fig.add_subplot(121, projection='3d')
    surf = ax1.plot_surface(Xi, Yi, Zi, cmap=cm.viridis, alpha=0.85,
                            edgecolor='none')
    ax1.set_xlabel(param_x)
    ax1.set_ylabel(param_y)
    ax1.set_zlabel('PnL, %')
    ax1.set_title('3D Parameter Landscape')
    fig.colorbar(surf, ax=ax1, shrink=0.5)

    # Right panel: heatmap with isolines
    ax2 = fig.add_subplot(122)
    hm = ax2.pcolormesh(Xi, Yi, Zi, cmap=cm.viridis, shading='auto')
    contours = ax2.contour(Xi, Yi, Zi, levels=10, colors='white',
                           linewidths=0.8, alpha=0.7)
    ax2.clabel(contours, inline=True, fontsize=8, fmt='%.0f%%')

    # Mark the best trial
    best = study.best_trial
    ax2.scatter(best.params[param_x], best.params[param_y],
                color='red', s=100, marker='*', zorder=5, label='Optimum')
    ax2.set_xlabel(param_x)
    ax2.set_ylabel(param_y)
    ax2.set_title('Contour Heatmap')
    ax2.legend()
    fig.colorbar(hm, ax=ax2)

    plt.tight_layout()
    plt.savefig(f'landscape_{param_x}_vs_{param_y}.png', dpi=150)
    plt.show()

A 3D surface plot for a robust strategy resembles a table mountain — a flat top with gentle slopes. For a fragile strategy — a sharp peak, like the Matterhorn. The heatmap complements the 3D view, showing the same information in a top-down projection with isolines.

Red Flags: When Optimization Results Are Suspicious

Warning indicators that signal potential overfitting in optimization results


Eight signs that the optimizer fitted noise rather than found a real pattern:

1. Sensitivity Ratio > 2 for a Key Parameter

If PnL drops more than 20% with a 10% parameter shift — the optimum is fragile.

2. Plateau Width < 10% of the Search Range

If the "good" region occupies less than 10% of the explored range — the optimizer most likely found an artifact.

3. Top-3 Trials Yield PnL 2-3x Above the Median

If the best trials are outliers against the rest rather than the "hilltop" — it's not a plateau.

completed_values = [t.values[0] for t in study.trials
                    if t.state == optuna.trial.TrialState.COMPLETE]
top_3_mean = np.mean(sorted(completed_values, reverse=True)[:3])
median_pnl = np.median(completed_values)

# The ratio is only meaningful when the median PnL is positive
if median_pnl > 0:
    outlier_ratio = top_3_mean / median_pnl
    if outlier_ratio > 2.5:
        print(f"WARNING: Top trials are {outlier_ratio:.1f}x above median: possible overfitting")

4. Low Trade Count (< 50) with High PnL

Small sample + high PnL = high variance in the estimate. Plateau analysis on 40 trades is unreliable in itself. For such strategies, Monte Carlo bootstrap is critical.

5. One "Magic" Parameter Combination

If the contour plot shows a single bright dot amidst a gray field — this isn't a strategy, it's a data-fitted combination.

6. Too Many Parameters

For 12 parameters with 10 values each, the search space contains $10^{12}$ combinations. Optuna explores ~500. The probability of finding a "good" artifact in such a space is high. The more parameters, the stricter plateau analysis should be.
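The scale of the problem can be illustrated with a toy simulation. It assumes that every trial is a strategy with zero true edge whose measured PnL is pure standard-normal noise. This is a deliberate simplification, not a model of any real optimizer, but it shows how the best result grows with the number of trials even when there is nothing to find.

```python
import random
import statistics

n_combinations = 10 ** 12
n_trials = 500
coverage = n_trials / n_combinations
print(f"Share of the grid explored: {coverage:.0e}")

def best_of_noise(n, rng):
    """Best 'PnL' among n strategies whose true edge is zero."""
    return max(rng.gauss(0.0, 1.0) for _ in range(n))

rng = random.Random(1)
# Average the best-of-n result over 200 repetitions
avg_best_5 = statistics.mean(best_of_noise(5, rng) for _ in range(200))
avg_best_500 = statistics.mean(best_of_noise(500, rng) for _ in range(200))
print(f"Average best of 5 noise trials:   {avg_best_5:.2f} sigma")
print(f"Average best of 500 noise trials: {avg_best_500:.2f} sigma")
```

The best of 500 pure-noise trials sits several standard deviations above zero, so a high best PnL on its own says little about a real edge.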

7. PnL Drops Sharply Out-of-Sample

If in-sample PnL is +87% and walk-forward shows +12% — the optimization fitted parameters to the training period. More about this in the Walk-Forward optimization article.

8. Parameters Are "Pinned" to Range Boundaries

If the optimal value coincides with the search grid boundary — the optimum may lie beyond the range. Expand the range and rerun the optimization.
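This check is easy to automate. The sketch below works on plain dictionaries; the parameter names, bounds, and the 2% tolerance are hypothetical. With Optuna, the bounds of a numeric parameter can usually be read from the `low` and `high` attributes of the distributions stored on the best trial, but treat that as an assumption to verify against your Optuna version.

```python
def pinned_params(best_params, search_space, tol=0.02):
    """Names of parameters whose best value lies within tol
    (as a fraction of the search range) of either bound."""
    pinned = []
    for name, value in best_params.items():
        low, high = search_space[name]
        margin = tol * (high - low)
        if value - low <= margin or high - value <= margin:
            pinned.append(name)
    return pinned

# Hypothetical search space and best parameters
search_space = {
    "htf_entry_sell": (0.005, 0.05),
    "stop_loss_pct": (0.5, 5.0),
}
best_params = {"htf_entry_sell": 0.020, "stop_loss_pct": 4.95}

flagged = pinned_params(best_params, search_space)
print("Pinned to a boundary:", flagged)
```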

Automated Plateau Analysis Report

Bringing it all together into a single report generated after each optimization:

import json
from datetime import datetime

import numpy as np
import optuna

def generate_plateau_report(
    study: "optuna.Study",
    strategy_name: str,
    n_trades: int,
    threshold_pct: float = 10.0,
) -> dict:
    """
    Generate a complete plateau analysis report.
    """
    robustness = compute_robustness_score(study, threshold_pct)
    red_flags = []

    # Red flag 1: high sensitivity among the three most important parameters
    sorted_params = sorted(
        robustness["parameters"].items(),
        key=lambda x: x[1]["importance"],
        reverse=True
    )
    for name, metrics in sorted_params[:3]:
        if metrics["sensitivity_ratio"] > 2.0:
            red_flags.append(
                f"High sensitivity for {name}: "
                f"S={metrics['sensitivity_ratio']:.2f}"
            )

    # Red flag 2: narrow plateau for any parameter
    for name, metrics in robustness["parameters"].items():
        if metrics["plateau_width_rel"] < 0.05:
            red_flags.append(
                f"Narrow plateau for {name}: "
                f"W={metrics['plateau_width_rel']:.1%}"
            )

    # Red flag 3: top trials are outliers relative to the median
    all_values = sorted(
        [t.values[0] for t in study.trials
         if t.state == optuna.trial.TrialState.COMPLETE],
        reverse=True
    )
    if len(all_values) > 10:
        top3 = np.mean(all_values[:3])
        med = np.median(all_values)
        if med > 0 and top3 / med > 2.5:
            red_flags.append(
                f"Top trials are outliers: "
                f"{top3:.1f} vs median {med:.1f} "
                f"({top3/med:.1f}x)"
            )

    # Red flag 4: small sample of trades
    if n_trades < 50:
        red_flags.append(f"Low trade count: {n_trades}")

    report = {
        "strategy": strategy_name,
        "timestamp": datetime.now().isoformat(),
        "best_pnl": study.best_value,
        "n_trials": len(study.trials),
        "n_trades": n_trades,
        "robustness_score": robustness["robustness_score"],
        "verdict": robustness["verdict"],
        "red_flags": red_flags,
        "parameters": robustness["parameters"],
    }
    return report

report = generate_plateau_report(
    study, strategy_name="Strategy A", n_trades=491
)
print(json.dumps(report, indent=2, default=str))

Example output:

{
  "strategy": "Strategy A",
  "best_pnl": 55.2,
  "n_trials": 500,
  "n_trades": 491,
  "robustness_score": 0.1482,
  "verdict": "robust",
  "red_flags": [],
  "parameters": {
    "htf_entry_sell": {
      "importance": 0.312,
      "sensitivity_ratio": 0.44,
      "plateau_width_rel": 0.35
    }
  }
}

Relationship with Walk-Forward Validation

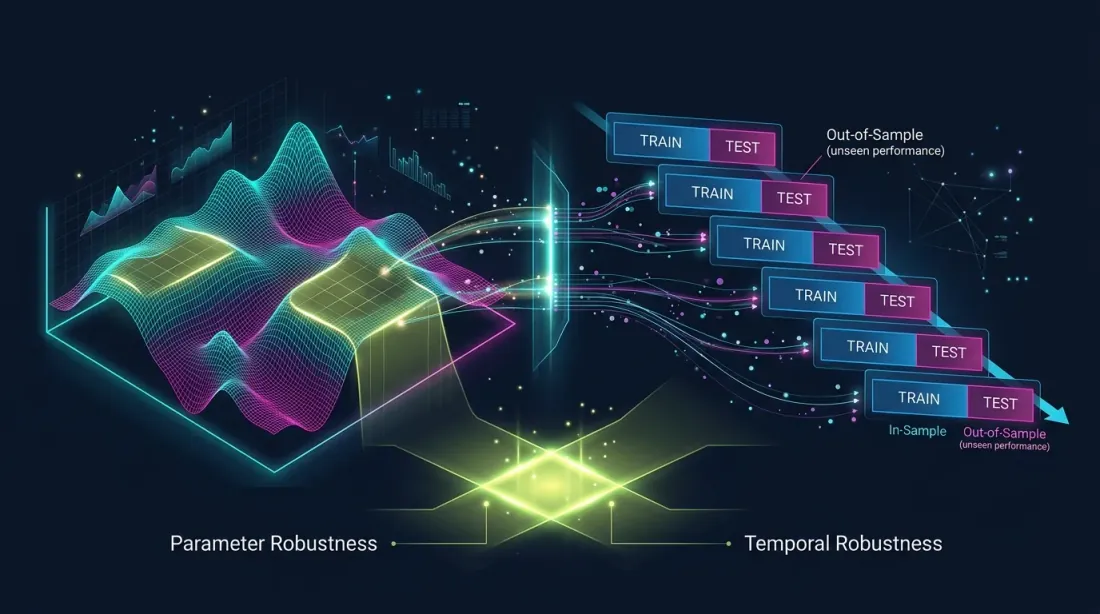

Parametric robustness (plateau analysis) and temporal robustness (walk-forward) as two complementary validation systems


Plateau analysis and walk-forward validation (WFO) are complementary methods:

- Plateau analysis answers the question: "How stable is the optimum to small parameter shifts?" This is a check of parametric robustness.

- Walk-forward answers the question: "Do the parameters work on data the optimizer hasn't seen?" This is a check of temporal robustness.

A strategy can pass plateau analysis (wide plateau) but fail walk-forward (market regime changed). And vice versa — it can pass walk-forward on fixed parameters but have a fragile optimum.

Recommendation: always use both methods. If a strategy passes both plateau analysis ($R > 0.1$) and walk-forward validation, this is a strong signal of robustness. More details in the Walk-Forward optimization article.

To assess PnL confidence intervals at each stage, apply Monte Carlo bootstrap. And for correctly comparing strategies with different active time, use the PnL per active time metric.

Recommendations

Before Optimization

- Limit the number of parameters. The fewer the parameters, the more reliable the plateau. 5-7 parameters is a reasonable maximum. 12 already requires heightened caution.

- Set meaningful ranges. Don't set htf_entry_sell from 0.001 to 1.0 if the realistic range is 0.005 to 0.05. Unnecessarily wide ranges create the illusion of a plateau.

- Use enough trials. For 12 parameters, a minimum of 300-500 trials. For reliable plateau analysis, 1000+.

During Optimization

- Watch convergence. If Optuna continues finding significantly better solutions after 400 trials, the process hasn't converged, and plateau analysis will be unreliable.

- Use pruning with caution. Aggressive pruning (MedianPruner) can cut trials that look bad in early steps but are important for building a complete landscape picture.
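One simple way to watch convergence is to compare the best value found before a checkpoint with the best value found overall. The sketch below assumes a maximization study and a positive best value; the two value sequences are hypothetical. With Optuna, the sequence could be built from the `value` of each completed trial in `study.trials`.

```python
def late_improvement(values, checkpoint):
    """Relative improvement of the best value found after `checkpoint`
    trials over the best value found before it (maximization)."""
    best_before = max(values[:checkpoint])
    best_overall = max(values)
    return (best_overall - best_before) / abs(best_before)

# Hypothetical objective values in trial order
converged = [10, 30, 48, 50, 49, 50.5, 47, 50.2]
still_climbing = [10, 20, 30, 35, 45, 55, 70, 87]

gain_converged = late_improvement(converged, checkpoint=4)
gain_climbing = late_improvement(still_climbing, checkpoint=4)
print(f"converged:      +{gain_converged:.0%} after the checkpoint")
print(f"still climbing: +{gain_climbing:.0%} after the checkpoint")
```

A gain of a few percent after the checkpoint suggests the search has settled; a gain of the same order as the best value itself means more trials are needed before the landscape can be trusted.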

After Optimization

- Generate the plateau report automatically. Integrate generate_plateau_report() into the optimization pipeline. Don't rely on visual assessment; use numbers.

- Check the top-5 parameters. If fANOVA shows that 3 parameters explain 80% of the variance, the remaining 9 can be checked less thoroughly.

- Compare with the baseline strategy. If the strategy with default parameters (no optimization) shows +30%, and the optimized one +55%, the difference is only 25 pp, and the plateau is likely wide. If the default shows 0%, and the optimized one +300%, all profitability depends on precise parameter fitting.

- Final check: walk-forward. Plateau analysis is a necessary but not sufficient condition for robustness. Always validate out-of-sample.

Conclusion

Parameter optimization is a powerful tool, but without plateau analysis it's a game of roulette. You don't know whether you've found a stable pattern or fitted the model to noise.

Three rules of plateau analysis:

- Compute the robustness score. The product of weighted plateau widths gives a single number that summarizes the robustness of all parameters. $R > 0.1$ is a green light.

- Sensitivity ratio < 1 for key parameters. If a 10% parameter shift causes less than a 10% PnL drop, the parameter is robust. If more, be cautious.

- Visualize contour plots. No metric can replace understanding the landscape shape. A flat table mountain is good. A sharp needle is bad.

Plateau analysis takes 5 minutes after optimization and can save weeks of unprofitable live trading. It's a mandatory step between study.optimize() and launching the bot.

Useful Links

- Optuna Documentation — Visualization

- Hutter, F., Hoos, H., Leyton-Brown, K. — An Efficient Approach for Assessing Hyperparameter Importance (fANOVA, 2014)

- Pardo, R. — The Evaluation and Optimization of Trading Strategies

- Marcos Lopez de Prado — Advances in Financial Machine Learning, Chapter 11: Dangers of Backtesting

- Bailey, D.H. et al. — The Probability of Backtest Overfitting (2015)

- Optuna — optuna.visualization.plot_contour

- Optuna — optuna.importance.FanovaImportanceEvaluator

- Bergstra, J. & Bengio, Y. — Random Search for Hyper-Parameter Optimization (2012)

Citation

@article{soloviov2026plateauanalysis,
  author = {Soloviov, Eugen},
  title = {Plateau Analysis: How to Distinguish a Robust Optimum from Overfitting},
  year = {2026},
  url = {https://marketmaker.cc/en/blog/post/plateau-analysis-overfitting},
  version = {0.1.0},
  description = {Why finding the best strategy parameters is only half the work. How to visually and quantitatively distinguish a stable plateau from a fragile peak, and why Optuna contour plots are a mandatory step before launching an optimized strategy into production.}
}

MarketMaker.cc Team

Quantitative Research & Strategy
