QuestDB for Algorithmic Trading: Architecture That Speaks the Language of Markets

Part 1 of 3 — also available in RU · ZH

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial, investment, or trading advice. Trading cryptocurrencies involves significant risk of loss.

Hello! Today we're kicking off a three-part deep dive into QuestDB — an open-source time-series database that is quietly becoming the backbone of modern trading infrastructure. This isn't another "top 10 databases" listicle. We're going to get technical, because that's what algorithmic trading demands.

If you've ever struggled with InfluxDB's cardinality limits, fought TimescaleDB's overhead on tick data, or wondered why your PostgreSQL instance can't keep up with a million inserts per second — this series is for you.

Why Time-Series Databases Matter for Trading

Every algorithmic trading system — from a simple grid bot to a full-blown HFT engine — has the same fundamental dependency: data. Specifically, time-ordered data that arrives at enormous velocity and needs to be queryable in real time.

Traditional relational databases weren't designed for this. They excel at ACID transactions and complex joins across normalized schemas, but they choke on the append-heavy, time-partitioned workloads that define financial markets. You end up fighting the database instead of letting it work for you.

Time-series databases flip this paradigm. They assume your data has a timestamp, that it arrives roughly in order, and that your queries will almost always involve time ranges. QuestDB takes this further by being designed specifically with capital markets in mind — its engineering team comes from Tier 1 investment banks (BoA, UBS, HSBC), and it shows in every design decision.

QuestDB at a Glance

QuestDB is an open-source (Apache 2.0) time-series database written in zero-GC Java, C++, and Rust. The "zero-GC" part is critical: the core engine avoids Java's garbage collector entirely, managing memory manually to eliminate the unpredictable latency spikes that plague most JVM-based systems.

Key performance characteristics worth noting:

- Ingestion throughput of millions of rows per second on a single server

- Sub-millisecond query latency through vectorized execution with SIMD instructions

- Native nanosecond-precision timestamps — essential for tick data

- Three-tier storage architecture optimized for both hot and cold data

- SQL interface with powerful time-series extensions

But raw numbers only tell part of the story. What makes QuestDB genuinely interesting for trading systems is how it stores and queries data.

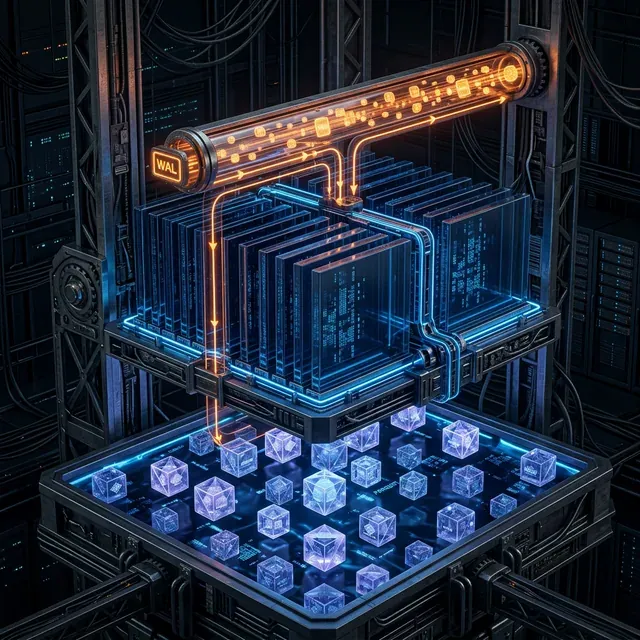

The Three-Tier Storage Engine

This is where QuestDB's architecture gets elegant. Data flows through three distinct tiers, each optimized for a different access pattern:

Tier 1: WAL (Write-Ahead Log)

Incoming data first hits the Write-Ahead Log. This is your ultra-low-latency append layer. Every write is made durable before any processing happens — surviving crashes and power failures without data loss. The WAL is sequential-write-only, which means it plays perfectly with modern SSDs and NVMe drives.

For trading systems, this means your market data ingestion pipeline can blast data into QuestDB without worrying about write amplification or lock contention. Whether you're receiving WebSocket updates from 50 crypto exchanges or processing a firehose of FIX messages, the WAL absorbs it all.

The WAL is also asynchronously shipped to object storage, enabling new replicas to bootstrap quickly and read the same history — a crucial property for disaster recovery in production trading environments.

Tier 2: Columnar Storage

Asynchronously, data is time-ordered, deduplicated, and written into QuestDB's native columnar format. This format is time-partitioned (by hour, day, week, or month depending on your data volume) and immediately queryable.

The columnar layout is what enables QuestDB's query performance. When you ask for the average price of BTC-USD over the last hour, the engine reads only the price column from the relevant time partitions — not entire rows. Combined with SIMD-vectorized execution across multiple cores, this yields the sub-millisecond query times that make real-time dashboards and live strategy calculations feasible.

Each table is stored as separate files per column, with fixed-size types getting one file per column and variable-size types (like VARCHAR) using two. This layout is purpose-built for the kinds of sequential scans that dominate time-series analytics.

Tier 3: Object Storage (Parquet)

Here's where cost management meets interoperability. Older partitions are automatically converted to Apache Parquet format and shipped to object storage (S3, Azure Blob, GCS). But — and this is the key innovation — you can still query them transparently through QuestDB's SQL interface. The query planner spans all three tiers seamlessly.

For algorithmic traders, this means you can keep years of historical tick data accessible for backtesting without paying for terabytes of SSD storage. Your Python backtesting framework can read the same Parquet files directly via Polars, Pandas, or Spark — no database export needed. Your ML training pipeline can access the same data through Arrow/ADBC for in-memory processing. Zero vendor lock-in.

This is a radically different proposition from proprietary database formats that trap your data behind a single query interface.

Schema Design for Trading Data

QuestDB's schema design philosophy revolves around a few critical concepts that align perfectly with trading data:

Designated Timestamp

Every time-series table needs a designated timestamp column. This isn't just metadata — it determines the physical storage order and enables partition pruning. Without it, you lose most of QuestDB's performance benefits:

CREATE TABLE trades (

timestamp TIMESTAMP,

symbol SYMBOL,

side SYMBOL,

price DOUBLE,

quantity DOUBLE

) TIMESTAMP(timestamp) PARTITION BY DAY;

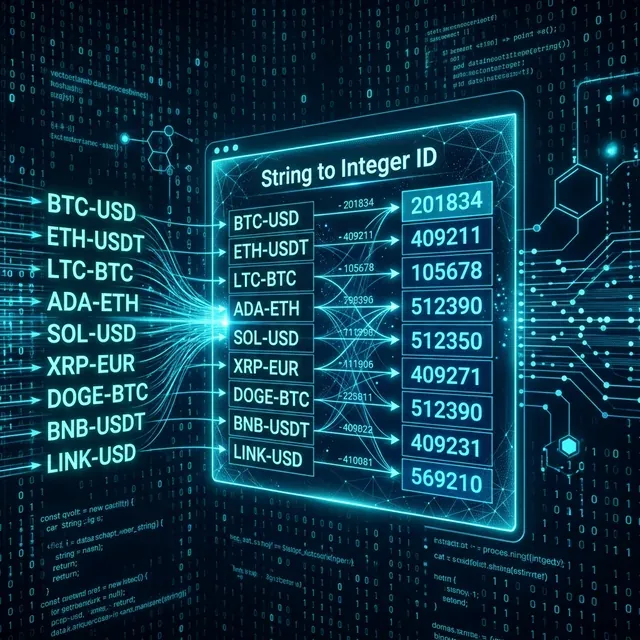

SYMBOL Type

The SYMBOL type is QuestDB's answer to the high-cardinality string problem. Trading pairs like "BTC-USD" or "ETH-USDT" are stored as integer-indexed dictionary entries. Filtering and grouping on SYMBOL columns is dramatically faster than on VARCHAR — QuestDB resolves string comparisons to integer comparisons at compile time.

If you're ingesting data from 100+ exchanges with thousands of trading pairs, this optimization alone can be the difference between a query taking 5ms and 500ms.

Partitioning Strategy

Partition size should match your data volume. High-frequency tick data (millions of rows per day per symbol) should use PARTITION BY HOUR. Lower-volume end-of-day data works fine with PARTITION BY MONTH. The goal is to keep individual partitions manageable while enabling efficient pruning:

-- High-volume tick data

CREATE TABLE ticks (...) TIMESTAMP(ts) PARTITION BY HOUR;

-- Lower-volume daily prices

CREATE TABLE eod_prices (...) TIMESTAMP(ts) PARTITION BY MONTH;

Data Deduplication

In real-world trading systems, duplicate data is inevitable. Network retransmissions, redundant exchange connections for reliability, replay of historical data during recovery — all of these produce duplicates. QuestDB handles this natively: when enabled, deduplication replaces matching rows with new versions, and only truly new rows are inserted.

The performance impact depends on your data pattern. If timestamps are mostly unique across rows, the overhead is minimal. The most demanding case is when many rows share the same timestamp and need deduplication on additional columns — common in order book snapshots where multiple price levels update simultaneously.

Production Deployment Considerations

QuestDB's production clients include B3 (Latin America's largest stock exchange), One Trading (regulated crypto exchange ingesting up to 4 million rows per second), Laser Digital (Nomura Group), and numerous Tier 1 banks and hedge funds.

Some practical deployment notes:

- QuestDB supports the PostgreSQL wire protocol, so most PG client libraries work out of the box

- For high-throughput ingestion, the InfluxDB Line Protocol (ILP) over HTTP or TCP is the recommended path

- Protocol Version 2 (from QuestDB 9.0+) adds binary encoding for arrays and doubles, significantly reducing bandwidth and server-side processing overhead

- Automatic schema creation and concurrent schema changes let you handle multiple data streams with on-the-fly modifications

The Enterprise edition adds RBAC (including column-level permissions), TLS encryption, automatic replication and failover, tiered storage to cloud object stores, and dedicated support with SLAs. For regulated environments, these are table stakes.

What's Coming in Parts 2 and 3

In Part 2, we'll dive deep into QuestDB's time-series SQL extensions — SAMPLE BY, ASOF JOIN, HORIZON JOIN, WINDOW JOIN, and LATEST ON — with real-world trading examples. These aren't incremental improvements over standard SQL; they're fundamentally different tools that eliminate entire categories of complex queries.

In Part 3, we'll cover the practical trading applications: materialized views for real-time OHLC, 2D arrays for order book analytics, and the full architecture of a QuestDB-powered algorithmic trading platform.

Stay tuned.

Citation

@software{soloviov2025questdb_algotrading_p1,

author = {Soloviov, Eugen},

title = {QuestDB for Algorithmic Trading: Architecture That Speaks the Language of Markets},

year = {2025},

url = {https://marketmaker.cc/en/blog/post/questdb-algotrading-architecture},

version = {0.1.0},

description = {Deep dive into QuestDB's three-tier storage architecture and schema design for algorithmic trading systems.}

}

MarketMaker.cc Team

Сандык изилдөөлөр жана стратегия

Read More

QuestDB for Algorithmic Trading: SQL Extensions That Change the Game

QuestDB for Algorithmic Trading: From Order Books to Production Architecture